Should All-Cause Mortality Be the Gold Standard in Prevention?

Mar 29, 2026

All-Cause Mortality Is Not Always the Best Standard for Preventive Medicine

Every day on social media I get asked, "But what about all-cause mortality?" These Medfluencers and Healthfluencers seem to think that unless a study shows a reduction in all-cause mortality (ACM), it is not a good study. They won't accept that a therapy reduces heart attacks and strokes, including non-fatal ones, because they think you can "fudge" those numbers, whereas all-cause mortality cannot be fudged or manipulated. While that is true, it is the wrong way to look at this.

This is a quandary and logical fallacy. They think that you must demonstrate a reduction in all-cause mortality for ALL therapeutics. A lot of online cholesterol disputers use this argument thinking that it will hold up. But it doesn’t.

First, powering a study for all-cause mortality can be very time-consuming, very expensive, and nearly impossible. If you want a study powered to show a 10-20% reduction in all-cause mortality, you may need 20 or more times as many participants as a study powered for a cause-specific endpoint, and you may need to follow them for most of their lives.

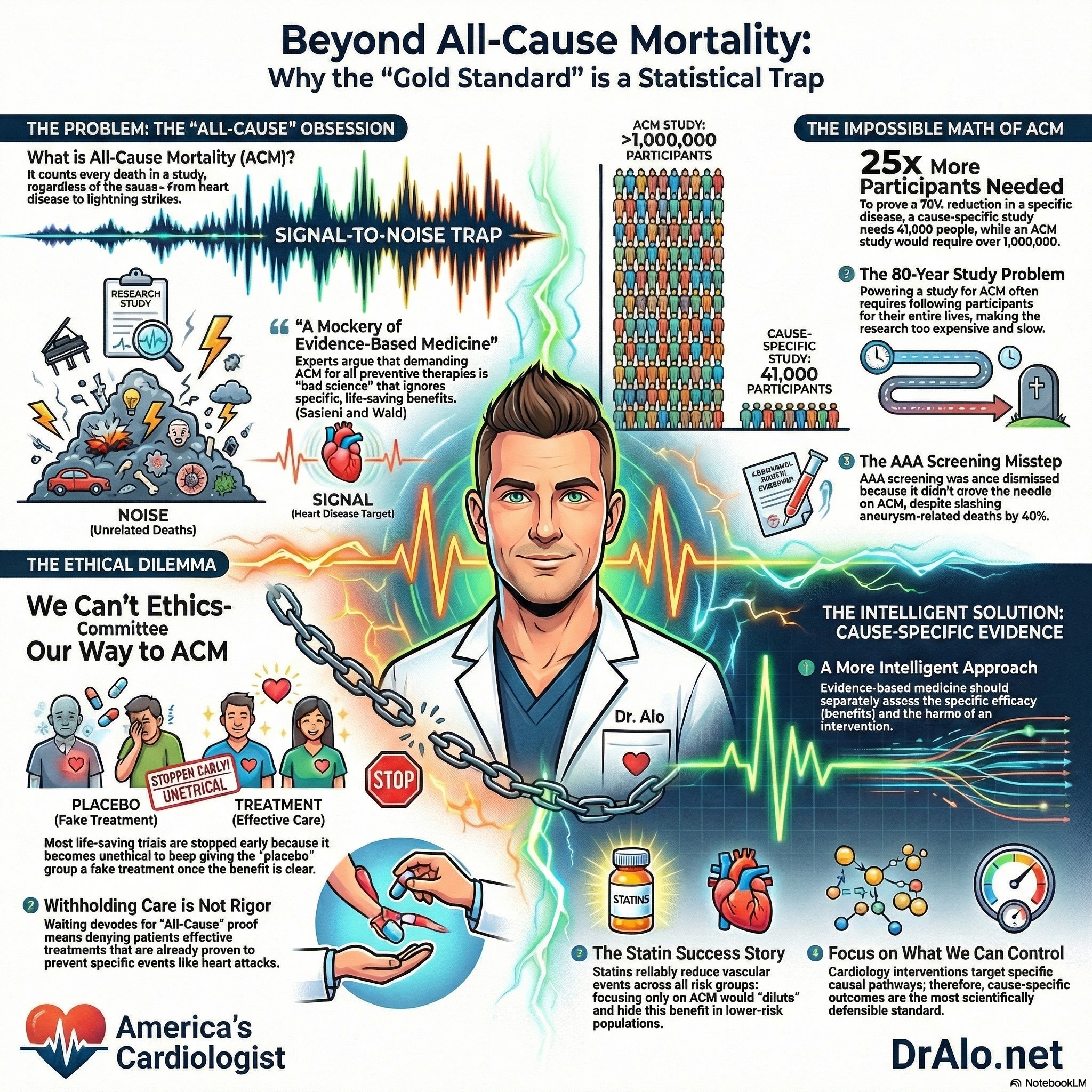

Infographic Summary On Chasing All-Cause Mortality:

Ethics Of All-Cause Mortality Cardiovascular Trials

Imagine we wanted to see if intervention X reduced all-cause mortality. We would need to enroll 100,000 participants and give them X for the rest of their lives. This would be an 80-100 year study. Sure, you could end it early after you have a few data points on death rates, but is that ethical? And if you end it early, it’s no longer powered to show a reduction in all-cause mortality. That’s what happens in research.

The ethics of powering a study for all-cause mortality are a serious problem. You must withhold the intervention from half the participants, keeping them on placebo for decades. This is unethical: some will die, and some will have strokes and heart attacks that could have been prevented.

This is why many studies end early: it became unethical to keep withholding the intervention from the placebo group. Some studies that were supposed to run for 5 years were stopped after 13 months by the ethics committee.

The Definitive Publication On All-Cause Mortality

Circulation published a great paper by Sasieni and Wald, “Should a Reduction in All-Cause Mortality Be the Goal When Assessing Preventive Medical Therapies?”

To quote from the paper, “More recently, some have argued that such therapies should not be used unless they have been shown to reduce all-cause mortality. Here, we argue that such an approach is bad science and makes a mockery of evidence-based medicine”.

Further they argue,

“Cardiovascular therapeutics should aim to reduce mortality, but it is not necessary to demonstrate a reduction in all-cause mortality when assessing them. It is, in general, misguided to attempt to demonstrate such an effect because it is too crude a measure of either benefit or harm. Results are dominated by causes of death unrelated to the intervention—the signal-to-noise ratio is low. Most effective interventions in public health and preventive medicine that have together led to substantial improvements in life expectancy would not have been introduced had policymakers required direct evidence of an impact on all-cause mortality.

Another problem with using all-cause mortality is that the impact of an intervention on all deaths will depend on the underlying risk of those diseases that it affects and is likely to be different in different populations. Many interventions have a relative impact on a disease that is similar to and independent of the underlying risk of the disease. Suppose, for instance, an intervention reduces the risk of dying from A by 30% (HR=0.7) but increases the risk from B by 100% (HR=2.0) (see Figure C). Then, if death from A is 3 to 4 times as common as death from B, the benefits and harms are finely balanced (population 1), but in a population where A is 40 times more common than B (population 2), the benefits greatly exceed the harms in terms of all-cause mortality. Comparison of coronary artery bypass grafting with medical therapy in patients with heart failure provides a good example. The randomized controlled trial found that coronary artery bypass grafting has a consistent beneficial effect on cardiovascular mortality regardless of age and no effect on noncardiovascular mortality, but it somewhat surprisingly emphasized that coronary artery bypass grafting has a more substantial benefit on all-cause mortality in younger compared with older patients.”

They concluded,

“Evidence-based medicine should not be about withholding interventions until they have been proven to reduce overall mortality. It should be analytic and intelligent, assessing the efficacy and harms of an intervention separately, and then applying these estimates to populations that might be offered the intervention, taking all evidence into account, including an assessment of whether it provides reasonable value for money.”

With that said, there have been plenty of cardiology trials that have shown a reduction in disease specific causes as well as all-cause mortality.

This matters most in the statin debates. As you can read in other parts of this blog, statin medications absolutely reduce all-cause mortality.

“Did It Prevent All Deaths?” Is the Wrong Question in Preventive Cardiology

Again, when evaluating whether a preventive therapy works, it seems intuitive to ask the most sweeping question possible: did it reduce the overall risk of dying? If a medication or screening program cannot demonstrably lower all-cause mortality, the argument goes, why use it at all?

Sasieni and Wald push back hard on this logic in their 2017 Circulation article. Their core argument is straightforward but important: demanding all-cause mortality as the primary endpoint for preventive therapies is not rigorous science; it is a statistical trap that obscures real benefits and distorts clinical decision-making.

The Signal-to-Noise Problem

The central statistical critique is one of sensitivity. Any given preventive intervention targets a specific cause of death, but an all-cause mortality analysis lumps that cause together with every other way a person might die: cancer, accidents, infections, organ failure, and more.

When the condition being prevented is responsible for only a fraction of total deaths, even a highly effective intervention produces only a small movement in the all-cause mortality number. The signal gets buried in the noise.

Sasieni and Wald illustrate this with a striking calculation. Suppose a disease is responsible for 5% of all deaths in a population, and a new intervention prevents 70% of deaths from that disease. To demonstrate a statistically significant reduction in all-cause mortality, a randomized controlled trial would need to enroll over 1 million participants. Using cause-specific mortality as the endpoint instead, that same trial would need only about 41,000 participants, more than 25 times fewer.

There is simply no scientific justification for demanding the larger, costlier, and more ethically burdensome trial when cause-specific evidence is both achievable and sufficient. It is too expensive and it is unnecessary. That is why we have science and statistics: so we don't have to expose everyone to danger (or withhold benefit).
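The gap between the two trial sizes can be sketched with a standard two-proportion sample-size formula. This is only an illustration, not the calculation used in the paper: the 10% overall mortality during follow-up, the 5% significance level, and the 80% power are all assumptions, so the absolute numbers will not match the paper's figure. The point is the ratio between the two designs.

```python
from math import ceil
from statistics import NormalDist


def n_per_arm(p_control, p_treated, alpha=0.05, power=0.80):
    """Approximate participants per arm needed to detect a difference
    between two event proportions (normal approximation, two-sided test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treated * (1 - p_treated)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_control - p_treated) ** 2)


# Hypothetical inputs (assumptions for illustration only)
overall_mortality = 0.10   # assumed share of participants who die during follow-up
disease_share = 0.05       # target disease causes 5% of all deaths
relative_reduction = 0.70  # intervention prevents 70% of deaths from that disease

p_disease = overall_mortality * disease_share
deaths_prevented = p_disease * relative_reduction

# Same absolute benefit, measured against two very different baselines
n_cause_specific = n_per_arm(p_disease, p_disease - deaths_prevented)
n_all_cause = n_per_arm(overall_mortality, overall_mortality - deaths_prevented)
ratio = n_all_cause / n_cause_specific

print(n_cause_specific, n_all_cause, round(ratio, 1))
```

Under these assumed inputs the all-cause trial comes out well over 20 times larger than the cause-specific trial. The absolute number of deaths prevented is identical in both designs; only the background noise it has to be detected against changes.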

A Real-World Example: AAA Screening

The authors point to abdominal aortic aneurysm (AAA) screening as a concrete case where fixating on all-cause mortality led to a misleading conclusion. The U.S. Preventive Services Task Force summarized the evidence by noting that AAA screening had “little or no effect on all-cause mortality,” a framing that implies the intervention may not be worth doing. But Sasieni and Wald argue this framing is scientifically indefensible.

The hazard ratio for aortic aneurysm deaths among screened patients was 0.60, a statistically powerful result, and there was no evidence that screening caused harm or elevated deaths from any other cause. Those two facts together, meaningful disease-specific benefit and no evidence of harm, constitute a compelling case for the intervention. Dismissing it because the all-cause mortality result did not reach significance is, in their words, both “scientifically wrong and unethical.”

The Population Dependency Problem

A second problem with all-cause mortality as an endpoint is that its apparent effect size varies depending on the population studied, even when the intervention’s actual biological effect is constant. The authors walk through a hypothetical in which a therapy reduces mortality from one disease by 30% but doubles the risk of dying from a different, rarer condition. In a population where the target disease is moderately common, the benefits and harms roughly cancel out in all-cause terms.

In a population where the target disease is far more prevalent, the intervention looks clearly beneficial. The therapy’s underlying pharmacological effect has not changed, only the population’s disease mix has. Using all-cause mortality as a universal benchmark therefore produces results that cannot be generalized across populations, undermining one of the core goals of evidence-based medicine.
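The arithmetic behind this hypothetical is short enough to check directly. The sketch below uses the hazard ratios from the paper's example (0.7 for disease A, 2.0 for disease B); the function name and the specific death counts (10 to 3, and 40 to 1) are illustrative choices, picked to match "3 to 4 times as common" and "40 times more common."

```python
def combined_death_ratio(deaths_a, deaths_b, hr_a=0.7, hr_b=2.0):
    """Deaths from A and B with treatment, relative to deaths without it.

    A value of 1.0 means benefit and harm cancel; below 1.0 means net benefit.
    """
    treated = hr_a * deaths_a + hr_b * deaths_b
    untreated = deaths_a + deaths_b
    return treated / untreated


# Population 1: A is about 3.3 times as common as B
balanced = combined_death_ratio(deaths_a=10, deaths_b=3)

# Population 2: A is 40 times as common as B
favorable = combined_death_ratio(deaths_a=40, deaths_b=1)

print(round(balanced, 2), round(favorable, 2))  # prints: 1.0 0.73
```

The hazard ratios are identical in both calls. Only the mix of deaths in the population differs, and that alone moves the combined result from "no net effect" to a roughly 27% reduction.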

The Statin Example: Burying Benefit Across Risk Groups

The Cholesterol Treatment Trialists’ Collaboration data on statin therapy make this point vividly in a real clinical context. Statins reliably reduce major vascular events across all levels of baseline cardiovascular risk: the relative risk reduction is roughly consistent regardless of whether a patient is low-risk or high-risk.

But the impact on all-cause mortality is not consistent across those same groups, because all-cause mortality blends together vascular deaths (which statins reduce) with non-vascular deaths (which statins do not affect).

In lower-risk populations, non-vascular deaths make up a larger share of total mortality, so the statin benefit is diluted and harder to detect. This does not mean statins are less effective in lower-risk patients; it means all-cause mortality is the wrong lens through which to evaluate them.
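The dilution effect can be shown with a few lines of arithmetic. The numbers here are hypothetical and are not taken from the Cholesterol Treatment Trialists’ data: the sketch assumes a therapy that cuts vascular deaths by 20% and leaves non-vascular deaths unchanged, and it compares two made-up populations that differ only in what share of deaths is vascular.

```python
def all_cause_risk_ratio(vascular_share, vascular_rr, non_vascular_rr=1.0):
    """Blend cause-specific risk ratios into one all-cause risk ratio,
    weighting each by its share of deaths in the untreated population."""
    return vascular_share * vascular_rr + (1 - vascular_share) * non_vascular_rr


vascular_rr = 0.80  # hypothetical: 20% fewer vascular deaths in both groups

# Hypothetical shares of all deaths that are vascular
for label, share in [("higher-risk", 0.50), ("lower-risk", 0.20)]:
    rr = all_cause_risk_ratio(share, vascular_rr)
    print(f"{label}: all-cause risk ratio = {rr:.2f}")
# prints:
# higher-risk: all-cause risk ratio = 0.90
# lower-risk: all-cause risk ratio = 0.96
```

The effect on vascular deaths is the same 20% in both groups, yet the all-cause figure shrinks from a 10% reduction to a 4% reduction purely because vascular deaths are a smaller slice of the total.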

Serious Harms Still Matter

It is worth noting that Sasieni and Wald are not arguing that adverse effects should be ignored. Their position is that cause-specific endpoints are the correct primary measure of efficacy, while serious harms are tracked separately and factored into an overall assessment of benefit versus risk. The early history of breast cancer radiotherapy illustrates this well.

Initial trials showed a clear reduction in locoregional tumor recurrence alongside an unexpected increase in cardiac deaths, resulting in no net benefit overall. This harm was identified and addressed: as radiotherapy techniques improved and cardiac radiation exposure decreased, the harm-benefit ratio shifted in favor of the treatment.

Had researchers evaluated radiotherapy only through the lens of all-cause mortality from the beginning, an ultimately valuable treatment might have been abandoned prematurely.

What Evidence-Based Medicine Should Actually Look Like

The authors close with a pointed reframing of evidence-based medicine itself. The discipline should not function as a gatekeeping exercise that withholds interventions until they survive the bluntest statistical test imaginable. Instead, it should be what the name implies: analytic, intelligent, and comprehensive, assessing the efficacy and harms of an intervention separately, applying those estimates to relevant populations, and incorporating value considerations.

Requiring proof of all-cause mortality reduction before acting on clear cause-specific benefit is not methodological rigor. It is methodological overcorrection that can deny patients effective care.

Challenging The Cholesterol Deniers

Peter Sasieni and Nicholas Wald challenge a common idea in modern evidence-based medicine: that preventive therapies should only be considered worthwhile if they are proven to reduce all-cause mortality. Their central argument is that this standard sounds rigorous, but in practice it is often scientifically misleading.

For many preventive treatments, the real benefit is best seen in cause-specific outcomes, such as fewer cardiovascular deaths, fewer heart attacks, or fewer aneurysm ruptures, rather than in overall death from every possible cause combined. Because all-cause mortality includes many deaths unrelated to the treatment being studied, it can blur or even hide a therapy’s true benefit.

The authors explain that all-cause mortality is often too blunt a measurement tool. On page 1, they note that when unrelated causes of death are mixed together with the disease the treatment is actually targeting, the “signal-to-noise ratio” becomes very low.

As a result, a genuinely effective intervention may appear unimpressive simply because its benefit is diluted by deaths it was never expected to prevent. They illustrate this with abdominal aortic aneurysm screening: even when screening clearly reduced aneurysm-related deaths, some reviewers dismissed its value because it did not produce a statistically strong reduction in all-cause mortality.

Sasieni and Wald argue that this is a misuse of evidence. If a treatment reduces the disease it is meant to prevent and does not create offsetting harm, that should count heavily in its favor.

Avoiding Enormous Studies And Cost

One of the article’s strongest points is its discussion of clinical trial design. The figure on page 2 shows how requiring all-cause mortality can make studies unnecessarily enormous. In their example, if a therapy prevents 70% of deaths from a specific disease that causes only a small share of all deaths, a trial using cause-specific mortality would need about 41,000 participants, whereas a trial using all-cause mortality would need about 1,095,000 participants to show the same benefit with adequate power.

That difference is dramatic. The authors argue that insisting on all-cause mortality in such situations is not just inefficient; it can prevent useful therapies from being recognized at all. The same figure also shows the reverse problem: a trial may appear worth continuing if investigators focus only on all-cause mortality, even when disease-specific results already show that further benefit is unlikely.

Do Not Ignore Harm

Sasieni and Wald are careful to say that focusing on cause-specific outcomes does not mean ignoring harm. Instead, they argue for a more intelligent assessment in which benefits and harms are measured separately and then weighed together.

They give the example of early breast cancer radiotherapy studies, where reduced cancer recurrence was offset by increased cardiac deaths; later improvements in radiotherapy changed that balance by preserving benefit while greatly reducing cardiovascular harm.

They also note that the effect of a treatment on all-cause mortality can vary from one population to another depending on how common the targeted disease is relative to competing risks. This is why all-cause mortality may be a poor basis for comparing therapies across different patient groups.

Does The Intervention Do What It Is Supposed To Do?

The article ends with a broader message about what evidence-based medicine should actually do. Rather than withholding preventive therapies until they can prove an effect on every death from every cause, clinicians and policymakers should evaluate whether an intervention reduces the outcomes it is designed to reduce, whether it introduces serious harm, and whether the overall tradeoff is worthwhile.

In other words, medicine should be analytic rather than simplistic. This article is a concise defense of common sense in clinical research: the best endpoint is not always the broadest one, and good science depends on choosing measures that truly fit the question being asked.

Takeaway

Sasieni and Wald make a case that is both technically sound and clinically relevant. The demand for all-cause mortality evidence as a prerequisite for preventive therapy reflects a misunderstanding of statistical power, disease heterogeneity, and the nature of prevention itself.

In cardiology especially, where interventions like lipid-lowering therapy, blood pressure control, and screening programs target specific, well-characterized causal pathways, cause-specific outcomes are not a shortcut. They are the appropriate and scientifically defensible standard.

Reference:

https://www.ahajournals.org/doi/10.1161/CIRCULATIONAHA.116.023359
